Old MLT experiments

These are some very old MLT tests that I found on my hard drive today.

Metropolis Light Transport (MLT) applies the Metropolis-Hastings algorithm, a Markov chain Monte Carlo (MCMC) method, to global illumination.

In a nutshell, MCMC algorithms explore the neighborhood of a Monte Carlo sample by jittering (mutating) said sample. A metric scores how good each sample is, and each mutation is accepted or rejected by comparing its score with that of the current sample. This leads to a random walk in the space of samples which tends to crawl up towards higher values of the metric.
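As a rough illustration of that accept/reject loop, here is a minimal random-walk Metropolis sketch on the unit interval. The `ramp` metric, the step size and the wrap-around at the borders are assumptions made for this toy example, not anything taken from a renderer.

```python
import random

def metropolis(metric, x0, n_steps, step=0.25, seed=0):
    """Random-walk Metropolis sampler on the unit interval [0, 1)."""
    rng = random.Random(seed)
    x, fx = x0, metric(x0)
    chain = []
    for _ in range(n_steps):
        # Mutate: jitter the current sample, wrapping around at the borders
        # so that the proposal stays symmetric.
        y = (x + rng.uniform(-step, step)) % 1.0
        fy = metric(y)
        # Accept with probability min(1, fy / fx); otherwise keep x.
        if rng.random() * fx < fy:
            x, fx = y, fy
        chain.append(x)
    return chain

def ramp(x):
    # Hypothetical metric: the larger x is, the "better" the sample.
    return x

chain = metropolis(ramp, x0=0.5, n_steps=50_000)
mean = sum(chain) / len(chain)
print(f"mean of visited samples: {mean:.3f} (uniform sampling would give 0.5)")
```

Because the chain spends more time where the metric is high, the mean of the visited samples should settle near 2/3 (the mean of a density proportional to x) rather than 1/2.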

This method biases sampling towards successful samples, so a bit of statistical juggling is required to counter that bias. The seminal paper linked below beautifully describes the nuts and bolts of this process. Its key idea is to jitter samples not in sample space directly, but in the space of quasi-random number tuples that generate them:

Simple and Robust Mutation Strategy for Metropolis Light Transport Algorithm
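To make the "statistics juggling" a bit more concrete, the sketch below estimates per-bin integrals of a function `g` from a Metropolis chain that is driven by a different, always-positive `importance` metric. Both functions, the bin count and the step size are hypothetical choices for this toy 1D case. The idea is that the chain visits x with density importance(x) / b, where b is the integral of the importance, so each visit deposits g(x) divided by that density.

```python
import random

def estimate_bins(g, importance, n_bins=4, n_steps=200_000,
                  n_norm=20_000, step=0.3, seed=2):
    """Estimate the integral of g over each bin of [0, 1) from a Metropolis chain."""
    rng = random.Random(seed)
    # Normalization constant b = integral of the importance, estimated
    # with ordinary uniform sampling.
    b = sum(importance(rng.random()) for _ in range(n_norm)) / n_norm

    x = 0.5
    ix = importance(x)
    bins = [0.0] * n_bins
    for _ in range(n_steps):
        y = (x + rng.uniform(-step, step)) % 1.0
        iy = importance(y)
        if rng.random() * ix < iy:
            x, ix = y, iy
        # The chain visits x with density importance(x) / b, so dividing the
        # contribution by that density undoes the bias.
        k = min(int(x * n_bins), n_bins - 1)
        bins[k] += g(x) * b / ix / n_steps
    return bins

def g(x):
    # Hypothetical quantity to integrate.
    return x * x

def importance(x):
    # Hypothetical metric driving the chain (always > 0).
    return x + 0.1

bins = estimate_bins(g, importance)
# Closed-form integral of x^2 over each quarter of [0, 1).
exact = [((k + 1) ** 3 - k ** 3) / 192 for k in range(4)]
for est, ref in zip(bins, exact):
    print(f"estimated {est:.4f}   exact {ref:.4f}")
```

In MLT terms, `g` would play the role of the path contribution to a pixel and `importance` the scalar luminance of the path, but that mapping is only an analogy here.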

In the particular case of MLT, samples are random light paths, and the metric used is the amount of light energy transported by a path. This can be implemented on top of regular Path Tracing. Arion was the first (?) commercial render engine that did this on the GPU back in 2012 or so.

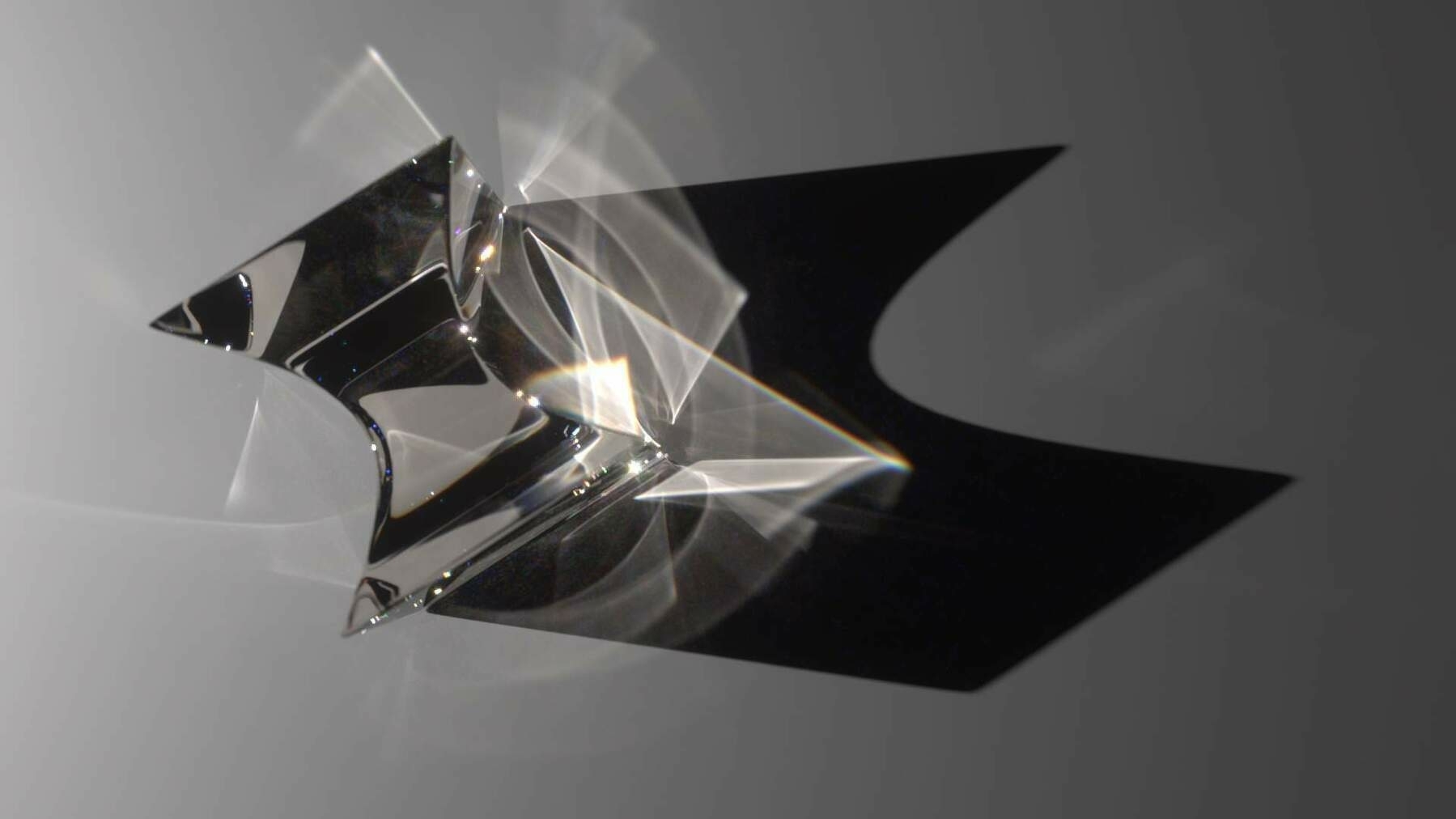

MLT has fallen out of favor over the years (path guiding is all the rage these days). Still, MLT proved to be a competent universal solution to global illumination, boosting path tracing so that it could efficiently solve even the hardest cases, such as refractive caustics.

The beauty of MLT is that it requires zero configuration from the user and does not need any additional memory for data structures. The main drawbacks are the ugly, splotchy, non-uniform look of its noise compared to regular path tracing, and the inability to guarantee noise stability across animation frames.

Experiments in 2D

Before I implemented MLT in Arion, I did some MCMC simulations with 2D sampling. In the videos below I used the (x,y) coords of pixels as samples, and the grayscale luminance Y of the image as the metric. The goal of the mutations here is to reconstruct the image by sampling harder in the directions of higher luminance.
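A minimal sketch of this kind of 2D experiment follows. It is not the original experiment code: the 4x4 luminance ramp stands in for a real grayscale image, and the step size and wrap-around mutation are assumptions. The (u, v) pair is mutated, the pixel luminance is the metric, and the normalized hit counts should approach the normalized image.

```python
import random

def reconstruct(image, n_steps=200_000, step=0.3, seed=1):
    """Visit pixels with a frequency proportional to their luminance."""
    h, w = len(image), len(image[0])
    rng = random.Random(seed)

    def pixel(u, v):
        # Map continuous coordinates in [0, 1) to a (row, column) pair.
        return min(int(v * h), h - 1), min(int(u * w), w - 1)

    u, v = 0.5, 0.5
    r, c = pixel(u, v)
    lum = image[r][c]
    hits = [[0] * w for _ in range(h)]
    for _ in range(n_steps):
        # Mutate both coordinates, wrapping around the image borders.
        u2 = (u + rng.uniform(-step, step)) % 1.0
        v2 = (v + rng.uniform(-step, step)) % 1.0
        r2, c2 = pixel(u2, v2)
        lum2 = image[r2][c2]
        # Accept with probability min(1, lum2 / lum).
        if rng.random() * lum < lum2:
            u, v, r, c, lum = u2, v2, r2, c2, lum2
        hits[r][c] += 1
    return [[n / n_steps for n in row] for row in hits]

# Hypothetical 4x4 luminance ramp standing in for a real grayscale image.
image = [[1 + r + c for c in range(4)] for r in range(4)]
recon = reconstruct(image)
norm = sum(map(sum, image))
target = [[y / norm for y in row] for row in image]
error = max(abs(recon[r][c] - target[r][c])
            for r in range(4) for c in range(4))
print(f"largest per-pixel deviation from the normalized image: {error:.4f}")
```

Every pixel in this toy image has non-zero luminance, so the chain can reach all of it with small steps alone. Images with black regions between bright islands are where the trouble starts.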

MCMC is prone to getting stuck for long amounts of time in local maxima. The practical solution proposed in the above paper is to introduce a probability p_large with which the next sample is sent to a purely random position in the sample space, kicking the sampling scheme out of wherever it is stuck. The videos below visualize this very well, I think.

Mutating in QRN tuple space without p_large

Mutating in QRN tuple space with p_large

MLT reconstructing a grayscale image
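The effect of the large-step kick can also be mimicked in a few lines. The sketch below is a toy 1D setup, not the original experiment: the `two_peaks` metric, the step size and the parameter name `p_large` are assumptions. Two bright islands are separated by darkness, and the chain starts in the left one.

```python
import random

def two_peaks(x):
    # Hypothetical metric: two bright islands separated by darkness.
    return 1.0 if (0.1 <= x <= 0.2) or (0.8 <= x <= 0.9) else 0.0

def fraction_in_right_peak(p_large, n_steps=50_000, step=0.02, seed=3):
    """Run a chain from the left peak and measure time spent in the right one."""
    rng = random.Random(seed)
    x = 0.15
    fx = two_peaks(x)
    right = 0
    for _ in range(n_steps):
        if rng.random() < p_large:
            # Large step: jump to a purely random position.
            y = rng.random()
        else:
            # Small step: jitter around the current sample.
            y = (x + rng.uniform(-step, step)) % 1.0
        fy = two_peaks(y)
        if rng.random() * fx < fy:
            x, fx = y, fy
        if x >= 0.8:
            right += 1
    return right / n_steps

stuck = fraction_in_right_peak(p_large=0.0)
mixed = fraction_in_right_peak(p_large=0.1)
print(f"time in right peak without large steps: {stuck:.3f}")
print(f"time in right peak with large steps:    {mixed:.3f}")
```

With small steps only, every proposal that leaves the left island lands in darkness and is rejected, so the right island is never found. With occasional large steps the chain should split its time roughly evenly between the two.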

Implementing MLT successfully in our render engine did require some specialization in our sampling routines. I won’t get into the details, but basically, since mutations happen in the random tuples that generate the paths, you expect continuous mutations in the tuples to produce continuous changes in the generated paths. So all samplers involved must avoid discontinuities in the [QRN<->path] bijection.
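As a generic illustration of the continuity requirement (not the actual Arion samplers), the sketch below draws from the same 1D mixture in two ways. Both functions and the lobe layout are hypothetical. Picking a lobe with a branch on the random number makes the output jump when the number crosses the branch threshold, whereas inverting the cumulative distribution of the whole mixture keeps the mapping continuous.

```python
def select_then_sample(u):
    """Pick one of two overlapping lobes with u, then reuse u inside it.

    Lobe A is uniform on [0, 1], lobe B is uniform on [0.5, 1.5].
    """
    if u < 0.5:
        return 2.0 * u                  # lobe A
    return 0.5 + (2.0 * u - 1.0)        # lobe B

def inverse_cdf(u):
    """Sample the same mixture by inverting its cumulative distribution."""
    if u < 0.25:
        return 2.0 * u                  # density 0.5 on [0, 0.5)
    if u < 0.75:
        return 0.5 + (u - 0.25)         # density 1.0 on [0.5, 1)
    return 1.0 + 2.0 * (u - 0.75)       # density 0.5 on [1, 1.5)

def largest_jump(sampler, n=10_000):
    """Largest change in the output between neighboring inputs on a fine grid."""
    xs = [sampler(i / n) for i in range(n)]
    return max(abs(b - a) for a, b in zip(xs, xs[1:]))

jump_select = largest_jump(select_then_sample)
jump_inverse = largest_jump(inverse_cdf)
print(f"largest jump, select-then-sample: {jump_select:.4f}")
print(f"largest jump, inverse CDF:        {jump_inverse:.4f}")
```

A tiny mutation of u near 0.5 moves the first sampler's output by about half a unit, which is exactly the kind of discontinuity that makes small mutations behave like large ones.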

Back in time

Some MLT-heavy images rendered in Arion back in the day: